Technology is necessary. But it’s not sufficient. You can buy all the tools in the world, but if you don’t have a strategy, you’ll just have expensive tools that don’t work together.

The organisations that will win aren’t the ones that block AI. They’re the ones that govern it. And that requires a framework, a way of thinking about the problem that goes beyond technology.

The Four Pillars of AI-Ready DLP Strategy

Pillar 1: Context-Aware DLP – Monitor What’s Actually Leaving

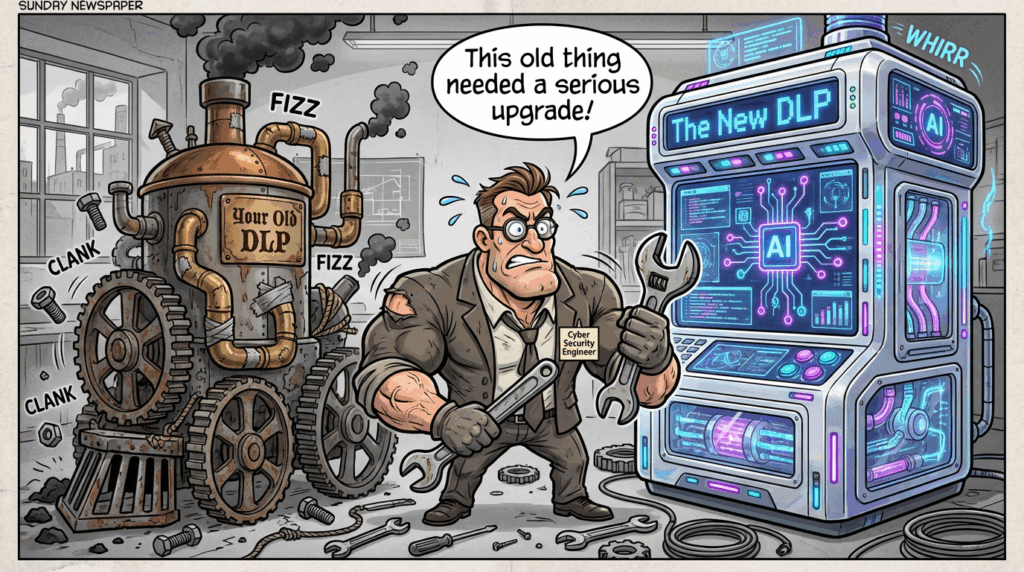

Traditional DLP watches email and Teams, but it is blind to the most dangerous channel: the browser. When an employee copies proprietary code into ChatGPT or pastes a financial forecast into Claude, your legacy DLP sees nothing. The data never touches your network. It never hits your email gateway.

Context-aware DLP changes this. It monitors what employees copy and paste into AI tools in real time, before the data reaches external LLMs. And because it understands context, it can do more than simply block.

What this means:

- Real-time prompt inspection: Detect when sensitive data (source code, customer records, financial projections, API keys) is about to be pasted into ChatGPT, Claude, Gemini, or Copilot

- Semantic analysis, not regex: Understand that a paragraph describing an M&A deal is confidential, even if it doesn’t match a pattern

- Graduated responses: Allow, warn, or block based on data type, user role, and destination AI tool

- User coaching, not just blocking: When someone tries to paste customer data into ChatGPT Free, warn them and redirect them to an approved alternative
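The graduated-response idea can be sketched as a small decision function. This is a minimal illustration, not how any particular DLP product works: the data-type labels, role names, tool identifiers, and the rules themselves are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    WARN = "warn"
    BLOCK = "block"


# Hypothetical labels: a real deployment would take these from its own
# classifier output and its own list of approved tools.
SENSITIVE_TYPES = {"source_code", "customer_records", "financial_projections"}
APPROVED_TOOLS = {"m365_copilot", "claude_enterprise"}


@dataclass(frozen=True)
class PasteEvent:
    data_type: str    # label assigned by the content classifier
    user_role: str    # e.g. "engineer", "contractor"
    destination: str  # AI tool the paste is headed to


def decide(event: PasteEvent) -> Action:
    """Return allow, warn, or block for a single paste event."""
    # Assumed rule: secrets are blocked regardless of destination.
    if event.data_type == "api_key":
        return Action.BLOCK
    # Non-sensitive content passes through untouched.
    if event.data_type not in SENSITIVE_TYPES:
        return Action.ALLOW
    # Sensitive content is fine in an approved enterprise tool.
    if event.destination in APPROVED_TOOLS:
        return Action.ALLOW
    # Assumed rule: contractors are blocked, employees are coached.
    if event.user_role == "contractor":
        return Action.BLOCK
    return Action.WARN
```

Under these assumed rules, an engineer pasting source code into a free tool gets a warning and a pointer to the approved alternative, while the same paste from a contractor is blocked.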

Implementation strategy: Start with your highest-risk data types (source code, financial data, legal documents, customer records) and your most-used AI tools (ChatGPT, Claude, Copilot). Deploy browser-based DLP agents or extensions that monitor copy-paste activity without requiring heavy endpoint agents.

Tools to evaluate: Microsoft Purview (integrated with M365 Copilot), Forcepoint DLP

Pillar 2: Use Enterprise AI – Give Employees a Secure Option

The fundamental problem with Shadow AI is that employees use free tools because they’re better, faster, and easier than approved alternatives. Block ChatGPT Free without providing an enterprise alternative, and you’ve just created a game of whack-a-mole. Employees will find the next tool.

The solution is not to block AI. It’s to provide better AI.

Enterprise AI tools like Microsoft 365 Copilot and Claude Enterprise offer:

- Data residency and compliance: Your data stays in your environment or in compliant data centres

- No model training: Your conversations don’t train public models

- Audit trails: Complete visibility into what was asked and what was answered

- Governance controls: Admins can set policies on what data can be used, which users can access the tool, and what outputs are allowed

- Better performance: Higher rate limits, priority processing, and custom fine-tuning

Implementation strategy:

- Assess your user base: Which teams use AI most? (Engineering, legal, finance, marketing)

- Pilot with high-value teams: Start with teams handling sensitive data or high-risk workflows

- Make it genuinely better: Higher limits, faster responses, better features than free alternatives

- Communicate clearly: Tell employees why you’re providing this tool and what it protects

- Measure adoption: Track migration from free tools to enterprise tools

The business case: A developer using ChatGPT Free might paste proprietary code into a tool with no audit trail and no data residency guarantee. The same developer using Claude Enterprise works inside an environment that has both. The cost of one IP leak often exceeds the annual cost of enterprise AI for your entire engineering team.

Pillar 3: Data Handling Policies – Guide, Don’t Prescribe

Most data handling policies are written like laws: “AI is prohibited except in the following approved cases.” This approach fails because:

- Employees don’t read them

- They’re too rigid to adapt to new tools

- They create a culture of workarounds, not responsibility

Better approach: Guide with principles, not rules.

Your updated data handling policy should:

Define what data can go where:

- Green zone (approved for AI): General business questions, non-sensitive research, learning and development

- Yellow zone (requires approval): Customer data, financial projections, internal strategy documents

- Red zone (never): Source code with credentials, unreleased product roadmaps, M&A materials, personal employee data

Specify which tools are approved for which data:

- Approved for all permitted data (green zone, and yellow zone with approval): Microsoft 365 Copilot, Claude Enterprise (with audit trails)

- Approved for non-sensitive data only: ChatGPT Plus (with user responsibility)

- Not approved: ChatGPT Free, Gemini Free, any tool without audit trails or data residency guarantees
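One way to make the zones and tool tiers checkable is to encode them as a lookup. The sketch below is an assumption about how the two lists combine: it treats the red zone as overriding every tool approval, requires explicit approval for yellow-zone data, and defaults unknown tools to "not approved". The names `Zone`, `Tier`, and `is_permitted` are hypothetical.

```python
from enum import Enum


class Zone(Enum):
    GREEN = "green"
    YELLOW = "yellow"
    RED = "red"


class Tier(Enum):
    ENTERPRISE = "enterprise"
    NON_SENSITIVE_ONLY = "non_sensitive_only"
    NOT_APPROVED = "not_approved"


# Tool tiers as listed in the policy above.
TOOL_TIERS = {
    "Microsoft 365 Copilot": Tier.ENTERPRISE,
    "Claude Enterprise": Tier.ENTERPRISE,
    "ChatGPT Plus": Tier.NON_SENSITIVE_ONLY,
    "ChatGPT Free": Tier.NOT_APPROVED,
    "Gemini Free": Tier.NOT_APPROVED,
}


def is_permitted(zone: Zone, tool: str, has_approval: bool = False) -> bool:
    """Check whether data in `zone` may be used with `tool`."""
    # Assumption: a tool missing from the list is treated as not approved.
    tier = TOOL_TIERS.get(tool, Tier.NOT_APPROVED)
    # Assumption: the red zone overrides every tool approval.
    if tier is Tier.NOT_APPROVED or zone is Zone.RED:
        return False
    if zone is Zone.GREEN:
        return True
    # Yellow zone: enterprise tool plus explicit approval.
    return tier is Tier.ENTERPRISE and has_approval
```

Defaulting unknown tools to "not approved" means a newly released AI tool is denied until someone classifies it, which keeps the policy from silently falling behind.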

Establish clear consequences:

- First violation: Coaching and education

- Repeated violations: Escalation to manager and security team

- Malicious violations: Disciplinary action

Pillar 4: DSPM for AI – Visibility Into What’s Already Exposed

By the time you deploy context-aware DLP and enterprise AI, some data has already leaked. Employees have already pasted code into ChatGPT. Contractors have already uploaded files to personal cloud storage. Former employees still have access to sensitive systems.

Data Security Posture Management (DSPM) for AI gives you visibility into this exposure.

DSPM answers questions that DLP cannot:

- Which sensitive data is accessible to which users?

- Which data has been shared externally (intentionally or not)?

- Which AI tools have already received sensitive data?

- Which users have excessive access to sensitive data?

- Which data is at highest risk of exposure?

Implementation approach:

- Discover what you have: Scan your data repositories (cloud storage, databases, collaboration tools, SaaS applications) to identify sensitive data

- Classify automatically: Use AI-powered classification to label data by sensitivity (PII, financial data, source code, legal documents, customer data)

- Map access: Understand who can access what data and why

- Identify exposure: Find data that’s been shared externally, stored in personal accounts, or accessible to contractors

- Prioritise remediation: Focus on highest-risk exposure first (e.g., customer data shared with external vendors, source code in personal cloud storage)
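The last step, ranking findings so the riskiest exposure is handled first, can be sketched as a simple score of sensitivity multiplied by exposure. The weights, category names, and the `Finding` structure are illustrative assumptions, not values from any DSPM product; a real tool would use its own model.

```python
from dataclasses import dataclass

# Illustrative weights only; tune these to your own risk appetite.
SENSITIVITY = {
    "customer_data": 5,
    "source_code": 5,
    "financial_data": 4,
    "pii": 4,
    "legal_documents": 3,
}
EXPOSURE = {
    "shared_externally": 3,
    "personal_account": 3,
    "contractor_access": 2,
    "internal_only": 1,
}


@dataclass(frozen=True)
class Finding:
    resource: str
    classification: str  # label from the classification step
    exposure: str        # result of the access and exposure mapping


def risk_score(finding: Finding) -> int:
    """Sensitivity weight times exposure weight; unknown labels score 1."""
    return (SENSITIVITY.get(finding.classification, 1)
            * EXPOSURE.get(finding.exposure, 1))


def prioritise(findings: list[Finding]) -> list[Finding]:
    """Return findings ordered from highest to lowest risk score."""
    return sorted(findings, key=risk_score, reverse=True)
```

With these weights, customer data shared externally scores 15 and lands ahead of source code that only contractors can reach, which scores 10.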

Tools to evaluate: Concentric AI (semantic intelligence for unstructured data), Nightfall AI (data lineage and exfiltration prevention), Microsoft Purview (integrated with Microsoft ecosystem).

Putting It Together: A 90-Day Implementation Roadmap

Month 1: Foundation

- Audit current DLP and insider risk tools: What gaps exist?

- Identify highest-risk data types: Where is your crown-jewel data?

- Assess AI tool usage: Which tools are employees using? Which data are they pasting?

- Update data handling policies: Draft new guidance on AI use

- Pilot context-aware DLP: Deploy to one high-risk team (engineering, finance, legal)

Month 2: Enablement

- Deploy enterprise AI tools: Pilot with teams identified in Month 1

- Implement DSPM: Scan your data repositories and identify exposure

- Train managers: Help them understand the new policies and their role in enforcement

- Launch user coaching: When violations occur, educate rather than punish

- Measure baseline: How much sensitive data is currently being pasted into free AI tools?

Month 3: Scale and Optimise

- Expand context-aware DLP: Roll out to all users

- Expand enterprise AI: Make it available to all teams

- Remediate high-risk exposure: Address findings from DSPM

- Refine policies: Based on real-world usage, adjust guidance

- Measure impact: How much has sensitive data exposure to free AI tools decreased?
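The Month 3 impact measure is a comparison against the Month 2 baseline. A minimal sketch, assuming you count sensitive-paste events into free AI tools over equal periods before and after rollout (the function name and the counting method are assumptions):

```python
def exposure_reduction(baseline_events: int, current_events: int) -> float:
    """Percentage drop in sensitive-paste events relative to the baseline.

    A negative result means exposure has increased since the baseline.
    """
    if baseline_events <= 0:
        raise ValueError("baseline_events must be positive")
    return (baseline_events - current_events) / baseline_events * 100
```

For example, with a hypothetical baseline of 200 events and 50 events in the comparison period, `exposure_reduction(200, 50)` returns `75.0`.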

The Governance Mindset

The organisations that will win in the Shadow AI era aren’t the ones with the most tools. They’re the ones with the clearest strategy.

That strategy rests on a simple principle: Assume employees will use AI. Design controls that let them use it safely.

This means:

- Visibility first: Know what data is where and who can access it

- Enablement second: Provide approved tools that are better than free alternatives

- Guidance third: Tell employees how to use AI responsibly

- Enforcement last: Only block when necessary; coach when possible

Technology enables this strategy, but strategy drives technology. Without a clear framework, you’ll deploy tools that don’t talk to each other, policies that contradict each other, and controls that frustrate employees without protecting data.

With a clear framework, you transform Shadow AI from a crisis into a managed risk.